The Virtual Stereosonic Task is a navigation-based assay for evaluating Sensory Substitution Devices (SSDs). It assesses how effectively auditory SSDs convert spatial information into reliable auditory cues for navigation.

The virtual task allows the creation of environments that are faithful to the real world while letting the investigator control the navigational difficulty. It also reduces the stress and anxiety associated with navigation among people who are blind or visually impaired.

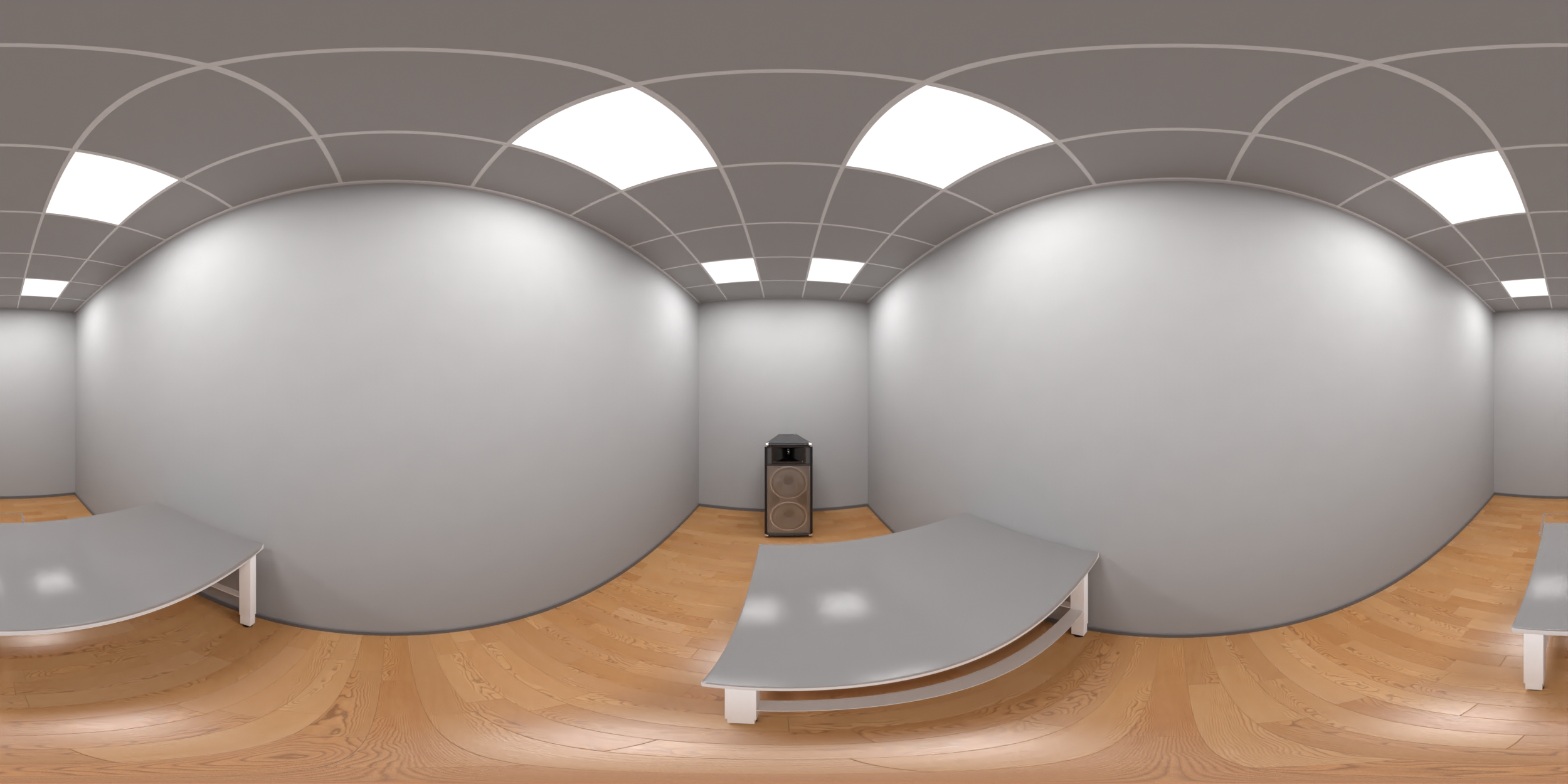

Mazeengineers offers the Virtual Stereosonic Task Apparatus.