In clinical settings, measurements are often designed to assess the effect of a new drug and/or treatment on a specific outcome, such as the frequency of symptoms. In practice, however, physical, emotional, cognitive, and social factors are intertwined. Consequently, researchers can never be certain whether the effect of the factor they measure is genuine and independent or whether it is influenced by other exposures.

Choosing the outcome measurements becomes paramount. Often associations and connections between variables and outcomes are affected by nuisance factors, which may lead to bias and misinterpretations. Confounders and effect-modifiers are two types of nuisance factors or co-variates that researchers need to consider and reduce, either at the design stage or the data analysis stage (Peat, 2011). One of the best ways to minimize the effects of such factors is to conduct a broad randomized controlled trial. Apart from the study design, there are suitable statistical procedures that can benefit each study and reduce external nuisance.

Confounders are common co-variates, which are defined as risk factors related to both the exposure and the outcome of interest (without lying on the causative pathway) (LaMorte & Sullivan). In other words, confounders lead to distortion in the measurement of the associations between the variables of interest. To avoid critical misinterpretations, experts need to investigate all possible confounders (Peat, 2011).

A clear example of confounding is the study of birth order and the risk of Down syndrome conducted by Stark and Mantel (1966). The research team suggested that there was an increased risk of Down syndrome in relation to birth order: the risk was estimated as higher with each successive child. However, the fact that birth order is linked to maternal age cannot be ignored. Even if the sibling gap is small, it’s logical to assume that maternal age increases with each successive child. In fact, maternal age proved to be a crucial factor.

Another example of confounding is patients’ history of smoking in relation to heart disease and exercise. Smoking itself is an established risk factor for heart disease. At the same time, smoking is linked to exercise: people who exercise on a regular basis usually smoke less. Thus, when experts analyze the association between heart disease and exercise, they need to consider the history of smoking (Peat, 2011).

Also, age can be a significant confounder. For instance, age can affect the relationship between physical activity and heart problems. Older people are usually less active, and at the same time, older people are at greater risk of developing a disease.

Confounders need to be reduced as they may lead to over- or under-estimation of associations. Over-estimation is the phenomenon of establishing a stronger association than the one that actually exists. On the other hand, under-estimation is the error of finding a weaker association than the real one. Removing the effects of confounders can be achieved at the design stage or the data analysis stage (Peat, 2011). Considering nuisance factors at the design stage is usually better, and it is especially important in study designs prone to bias, such as case-control, cohort, cross-sectional, and ecological studies.

In fact, one of the best ways to control for confounders is the use of large randomized trials. Otherwise, if experts let the subjects allocate themselves to different groups, this may lead to selection bias and may affect the confounding effect. Let’s explore the study which investigated the connection between heart issues and exercising again. If experts let subjects self-allocate to an exposure group, often smokers would allocate themselves to a low exercise frequency group. Thus, smoking could become a significant confounder (Peat, 2011).
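As a minimal sketch of why random allocation helps, the snippet below assigns hypothetical subjects to two exercise groups at random and compares the share of smokers in each group. The cohort size, the 30% smoking prevalence, the fixed seed, and the function names are illustrative assumptions, not data or methods from the study discussed above.

```python
import random

def randomize(subjects, seed=0):
    """Shuffle subjects and split them into two equally sized groups."""
    rng = random.Random(seed)      # fixed seed so the sketch is reproducible
    shuffled = subjects[:]         # copy, so the input list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def smoker_share(group):
    """Proportion of smokers in a group."""
    return sum(s["smoker"] for s in group) / len(group)

# Hypothetical cohort: 1,000 subjects, of whom 300 smoke
subjects = [{"id": i, "smoker": i < 300} for i in range(1000)]

high_exercise, low_exercise = randomize(subjects)
print("smokers in high-exercise group:", smoker_share(high_exercise))
print("smokers in low-exercise group: ", smoker_share(low_exercise))
```

With random allocation the two shares should be close to each other, so smoking is roughly balanced across the groups. Under self-allocation there is no such guarantee.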

Sometimes mathematical adjustment of groups may be needed to reduce the uneven distribution of confounders. Controlling for confounders at the data analysis stage, however, is less effective. Methods for controlling confounders include restriction, matching, and stratification.

A powerful method is to perform a stratified analysis. Stratified analyses require the measurement of confounders in subgroups or strata. A confounder can have more than one category, and each category is known as a stratum. All levels should be analyzed; for instance, there might be separate analyses for each gender (Peat, 2011). If the stratified estimates differ from the total estimate, this indicates a confounding effect. For instance, a study on the connection between living areas (urban and rural) and chronic bronchitis used a stratified analysis. The study showed that smoking is a confounder in the relationship between living area and bronchitis, and in fact, smoking may be more prevalent in urban areas.
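The comparison between stratified and total estimates can be sketched in a few lines of Python. All counts below are hypothetical and were chosen only to make the pattern visible; they are not taken from the bronchitis study. The Mantel-Haenszel pooled odds ratio is used here as one common way to combine strata; the text above does not say which estimator the study used.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio for a 2x2 table.

    a, b = exposed cases / exposed non-cases
    c, d = unexposed cases / unexposed non-cases
    """
    return (a * d) / (b * c)

def mantel_haenszel_or(tables):
    """Pooled (confounder-adjusted) odds ratio across strata."""
    tables = list(tables)
    num = sum(a * d / (a + b + c + d) for a, b, c, d in tables)
    den = sum(b * c / (a + b + c + d) for a, b, c, d in tables)
    return num / den

# Hypothetical counts: exposure = urban living, outcome = chronic bronchitis
# Each tuple is (urban cases, urban non-cases, rural cases, rural non-cases)
strata = {
    "smokers":     (60, 140, 30, 70),
    "non-smokers": (10, 90, 20, 180),
}

# Collapse the strata into one total (crude) table by summing each cell
crude_table = tuple(sum(cells) for cells in zip(*strata.values()))

crude_or = odds_ratio(*crude_table)
stratum_ors = {name: odds_ratio(*t) for name, t in strata.items()}
adjusted_or = mantel_haenszel_or(strata.values())

print("crude OR:", round(crude_or, 2))
print("stratum ORs:", stratum_ors)
print("Mantel-Haenszel OR:", round(adjusted_or, 2))
```

In this made-up data set the crude odds ratio is above 1 while both stratum-specific estimates and the pooled estimate equal 1. The apparent urban effect comes entirely from smoking being more common among urban residents.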

Stratified analysis requires mathematical adjustments, and therefore, the sample size is also crucial. In large samples, confounders may seem statistically significant while in real life they are not of clinical importance. On the other hand, small samples might not reveal statistical significance while in reality confounders affect the results. Thus, confounders with an odds ratio (OR), a measure of the association between an exposure factor and an outcome variable, greater than 2.0 should always be considered (Peat, 2011).
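To illustrate how sample size interacts with the odds ratio, the sketch below computes the same OR of 2.0 from a small and a large hypothetical 2x2 table, together with an approximate 95% confidence interval. The counts are invented, and the log-based (Woolf) interval is an assumption chosen for simplicity rather than a method named in the source.

```python
import math

def odds_ratio_ci(a, b, c, d, z=1.96):
    """Return (OR, lower, upper) for a 2x2 table using the log (Woolf) method.

    a, b = exposed cases / exposed non-cases
    c, d = unexposed cases / unexposed non-cases
    z    = normal quantile (1.96 gives an approximate 95% interval)
    """
    or_ = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # standard error of ln(OR)
    lower = math.exp(math.log(or_) - z * se_log)
    upper = math.exp(math.log(or_) + z * se_log)
    return or_, lower, upper

# Same odds ratio, very different sample sizes (hypothetical counts)
small = odds_ratio_ci(10, 10, 5, 10)
large = odds_ratio_ci(1000, 1000, 500, 1000)

for label, (or_, lo, hi) in (("small", small), ("large", large)):
    print(f"{label}: OR={or_:.2f}, 95% CI {lo:.2f} to {hi:.2f}")
```

In the small table the interval includes 1, so the association would not reach statistical significance even though the point estimate is 2.0. In the large table the interval excludes 1. This is why the size of the estimate, and not only its p-value, deserves attention.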

Multivariate and logistic regression analyses also minimize the effects of confounders. What’s more, they are two of the most powerful statistical procedures.

While confounders affect both the exposure and the outcome, effect modifiers are associated only with the outcome (“The difference between ‘Effect Modification’ & ‘Confounding,’” 2015). Effect modifiers, or interactive variables, change the effect of the causal factor on an outcome. Effect modification becomes apparent under stratification, when an exposure has a different effect on the outcome in different subgroups; for instance, when a new drug has a different effect on men and women.

Effect modifiers, however, are not a nuisance because they can give valuable insights. In fact, they can be easily recognized in each stratum (Peat, 2011). The age difference and the risk of a disease can be clear examples of effect modifiers. For instance, when the risk of disease is estimated for different age strata, in many studies, it’s visible that this risk increases with age.

Stratified analysis can be a beneficial way to analyze effect modifiers. Let’s take a sample stratified by smoking and analyze the relationship between smoking and the risk of myocardial infarction. In this case, blood pressure acts as an effect-modifier. Note that it’s been proven that the risk of myocardial infarction is higher in smokers with normal blood pressure than in smokers with higher blood pressure (Peat, 2011).
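A small Python sketch can show what effect modification looks like in a stratified analysis. The counts are hypothetical and serve only to reproduce the direction of the pattern described above (a higher relative risk among smokers with normal blood pressure); they are not figures from Peat (2011).

```python
def relative_risk(exposed_cases, exposed_total, unexposed_cases, unexposed_total):
    """Risk in the exposed group divided by risk in the unexposed group."""
    return (exposed_cases / exposed_total) / (unexposed_cases / unexposed_total)

# Hypothetical counts of myocardial infarction, stratified by blood pressure
# Each tuple is (smoker cases, smokers, non-smoker cases, non-smokers)
strata = {
    "normal blood pressure": (30, 1000, 10, 1000),
    "high blood pressure":   (60, 1000, 40, 1000),
}

stratum_rr = {name: relative_risk(*counts) for name, counts in strata.items()}

# Ratio of the two stratum-specific relative risks; a value far from 1
# suggests that blood pressure modifies the effect of smoking
rr_ratio = stratum_rr["normal blood pressure"] / stratum_rr["high blood pressure"]

for name, rr in stratum_rr.items():
    print(f"{name}: RR = {rr:.2f}")
print(f"ratio of stratum RRs = {rr_ratio:.2f}")
```

Unlike confounding, where the stratum estimates agree with each other but differ from the total estimate, effect modification shows up as stratum estimates that differ from one another. In that case the strata are best reported separately rather than pooled.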

However, when there are more than a few effect modifiers, multivariate analysis is better. Note that confounders and effect-modifiers are treated differently in multivariate analysis.
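One common way to express this difference, sketched here for a logistic model with a binary outcome $Y$, is that a confounder enters as an additional main-effect term, whereas an effect-modifier enters through a product (interaction) term. The symbols $E$ (exposure), $C$ (confounder), and $M$ (effect-modifier) are notation introduced for illustration and are not taken from Peat (2011).

```latex
\begin{align}
\text{Confounder } C:\quad
  & \operatorname{logit} P(Y = 1) = \beta_0 + \beta_1 E + \beta_2 C \\
\text{Effect-modifier } M:\quad
  & \operatorname{logit} P(Y = 1) = \beta_0 + \beta_1 E + \beta_2 M + \beta_3 (E \times M)
\end{align}
```

In the first model, $e^{\beta_1}$ is the odds ratio for the exposure adjusted for $C$. In the second model, with a binary $M$, the odds ratio for the exposure is $e^{\beta_1}$ when $M = 0$ and $e^{\beta_1 + \beta_3}$ when $M = 1$, so a non-zero $\beta_3$ indicates effect modification.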

In fact, the size of the estimates, or β coefficients, is always vital (Peat, 2011). Also, note that when the outcome variable is dichotomous, a larger sample is needed to provide meaningful results in the analysis.

Intervening or mediating variables are also crucial factors that experts need to consider. They are hypothetical variables that cannot be observed in practice (“Intervening Variables”). Thus, it is not possible to say how much of the effect is due to the independent variable and how much is due to the intervening variables.

A classic example is the study of the connection between poverty (independent variable) and shorter lifespan (dependent variable). We cannot say that poverty directly affects longevity, so an intervening variable, such as lack of access to healthcare, can be vital. Although intervening variables are an alternative outcome of the exposure factor, they cannot be included as an exposure factor because, as explained above, they are hypothetical constructs (Peat, 2011).

As intervening variables are closely related to the outcome, they may distort all multivariate models (Peat, 2011).

An example is a study on the development of asthma. In this case, hay fever will be an intervening factor as hay fever is part of the same allergic process as the development of asthma (for instance, exposure to particles, such as pollens). Thus, hay fever and asthma have strong associations.

Deciding if a factor is a confounder, an effect-modifier or an intervening variable requires careful consideration and good data analysis.

Note that all factors can be categorical or continuously distributed. In any case, misinterpretation of variables and associations may lead to bias.

Research should eliminate errors and bias because they can affect people’s well-being and lead to fatal outcomes.

Intervening Variables. Retrieved from http://www.statisticshowto.com/intervening-variable/

LaMorte, W., & Sullivan, L. Confounding and Effect Measure Modification. Retrieved from http://sphweb.bumc.bu.edu/otlt/MPH-Modules/BS/BS704-EP713_Confounding-EM/BS704-EP713_Confounding-EM_print.html

Peat, J. (2011). Choosing the Measurement. Health Science Research, SAGE Publications, Ltd.

Stark, C., & Mantel, N. (1966). Effects of Maternal Age and Birth Order on the Risk of Mongolism and Leukemia. JNCI: Journal of the National Cancer Institute, Volume 37, Issue 5, p. 687-698.

The difference between ‘Effect Modification’ & ‘Confounding’ (2015, June 4). Retrieved from https://www.students4bestevidence.net/difference-effect-modification-confounding/

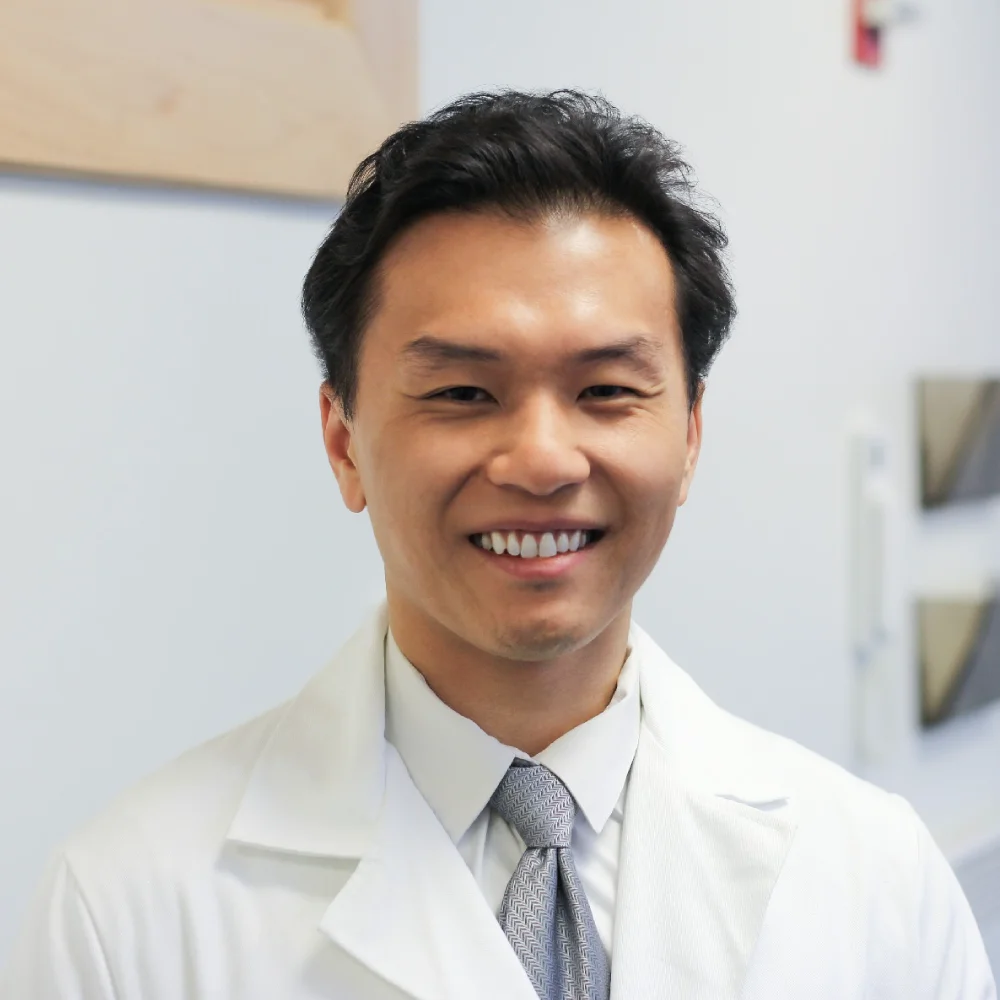

Shuhan He, MD is a dual-board certified physician with expertise in Emergency Medicine and Clinical Informatics. Dr. He works at the Laboratory of Computer Science, clinically in the Department of Emergency Medicine and Instructor of Medicine at Harvard Medical School. He serves as the Program Director of Healthcare Data Analytics at MGHIHP. Dr. He has interests at the intersection of acute care and computer science, utilizing algorithmic approaches to systems with a focus on large actionable data and Bayesian interpretation. Committed to making a positive impact in the field of healthcare through the use of cutting-edge technology and data analytics.
